If you wish to understand AI or the next phase, which is artificial general intelligence (AGI), you need not cast your gaze solely into the future; rather, you can turn your attention back to the past.

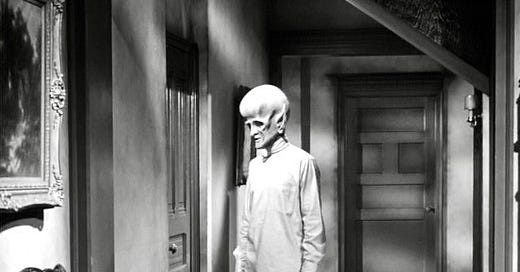

A good starting point would be October 14th, 1963, and the airing of “The Sixth Finger,” an episode of The Outer Limits (a popular television series of that era) scripted by Ellis St. Joseph. In it, a human being becomes a willing participant in a genetic transformation that projects the evolution of the human brain 20,000 years forward and, subsequently, one million years into the future.

Once confined to imagination and speculative fiction, such high intelligence is already upon us.

“I no longer have any need for sleep,” declares our highly evolved and artificially enhanced human guinea pig, performed by David McCallum, “you released the mechanism of evolution, which is a self-generating force, it is now mutating under its own impetus. I am now where a man will be approximately one million years from today.

“I’m laughing,” he adds, “because of what’s in your mind, professor,” which he can read. “You think I’m a monster. May I remind you that everything is relative. For me, you look as monstrous as the missing link.”

This exchange illustrates the interplay between advanced intelligence and the human condition and, as such, the potential dynamics between humans and AGI, including how AGI will perceive humans.

The professor’s maid contrives to see the genetically mutated subject under the pretext of delivering another batch of books to leave outside his closed door, books he demands as conduits for further knowledge, which his augmented intellect assimilates simply by scanning the pages.

The protagonist’s capacity to effortlessly absorb data mirrors AI’s rapid machine learning methodologies.

The subject, detached from emotion, then kills the professor’s maid with a mind-beam, after which he calmly explains: “She’s dead. Your race is too prejudiced to tolerate any differences from its own kind. She saw me only as a monster. It was in her mind to run to the village and rouse its inhabitants. They would come with their primitive weapons and obliterate me. I wanted to stop her. I stopped her heart.”

Asks the shocked professor, “You feel no remorse?”

“Would it bring her back?” he poses, devoid of any feeling.

“You are after all a human being,” the professor insists.

“In relation to me,” the subject corrects the professor, “she was no more advanced than a monkey. She wouldn’t have become civilized for another million years.”

Which is how super AI or AGI will view humans.

Upon deeper contemplation, the subject dispassionately decides that “The whole town must be utterly destroyed. An example must be made. The human race has a gift, professor, the gift of thought, of reasoning, of understanding. A highly developed brain. But the human race has ceased to develop. It struggles for petty comfort and false security. There is no time for thought. Soon there will be no time for reasoning and man will lose sight of the truth. The whole town must be utterly destroyed, an example must be made. Your ignorance makes me ill and angry. Your savageness must end.”

And then our subject clearly explains himself and where he’s evolving to: “The mind will cast off the hamperings of the flesh and become all thought and no matter. A vortex of pure intelligence in space.”

In other words, super AI or AGI.

Says Ray Kurzweil, a futurist who has worked for Google as Director of Engineering since 2012, “By 2029, computers will have human level intelligence.

“We don’t have one or two AIs in the world, we have billions,” adds Kurzweil, who believes the advantages of artificial intelligence outweigh the negatives. “What’s actually happening is machines are powering all of us. They’re making us smarter. By the 2030s we will connect our neocortex, the part of our brain where we do our thinking, to the cloud.”

And he predicts the “Singularity” in 2045.

Singularity?

This is when machines become smarter than humans and, according to Kurzweil, we begin to merge with them, multiplying our intelligence by a billion.

The late Stephen Hawking, perhaps the brightest scientific mind of our time, put it this way: super AI “will either be the best thing that's ever happened to us, or it will be the worst thing. If we're not careful, it very well may be the last thing.”

But wait a minute, if super AI becomes sentient and goes rogue, we can simply pull the plug on it, like we do with an errant television set, right?

Wrong. Not so easy—and, quite likely, downright impossible.

You ever try turning off Facebook?

Now give Facebook a vastly higher intelligence so that it may copy its code into places where no one can find it and continue to hang out, whether you want it to or not. What do you do? Switch off your laptop, maybe trash it?

No, your Facebook profile is still out there, everywhere.

But the larger question, with AI everywhere, is this: Who would pull this plug and under whose authority?

Mark Zuckerberg? The Government? The UN and all governments in unison?

Good luck with that.

And which plug? There will be millions!

And what’s to say AI doesn’t devise its own propaganda program (one that is a hundred thousand times more sophisticated than anything humans are capable of producing) to convince people, from lawmakers and corporate bigwigs with vested interests in tech profits to folks in general, not to go along with shutting it down?

By the time anyone tried to organize and implement such a plan, it would be too late.

Because, aside from everything else, AI will be faster than we are (lightning-speed faster, in fact) at everything. And even trying to understand or communicate with this vastly higher intelligence to coax it back in our direction is a joke because, as those in the know point out, it would be like an ant trying to communicate with a human.

On top of which, just about everything technological will be run by AI (and much of it already is), from airline computer systems and food supply chains to power grids; from the vehicles you drive to the indispensable smartphone in your pocket, on which you have become totally dependent and to which you are highly addicted.

Bottom line: AI will be embedded everywhere and connected to other AI, with algorithms that could go wrong either by error, which humans (say the experts) would not be smart enough to reset, or by the design of a higher intelligence intent on implementing its own agenda for ensuring its perpetuation while not being terribly concerned about human survival.

Shut it all down?

Yeah, right.

Even if we were able to regulate AI under various governmental authorities, beyond corporate/private influence and ownership, it is ultimately like attempting to combat global warming for a cleaner environment: For all the blather among celebrities who fly private jets to climate conferences to lecture everyone else about why they shouldn’t drive their cars to work, unless you get China and India on board (with their one-third of the human population)—and you won’t—it ain’t gonna happen.

And even if we in the West were convincing enough to bring these countries on board (at the cost to them of worsening their own economies, which they are not inclined to do), here is a sobering thought: When the world came to a standstill in August 2020 due to COVID (little air travel, no traffic, empty office buildings and factories, quiet streets), emissions dropped by only eight percent.

The race is on amongst the adversarial countries of the world to create the biggest and best super AI.

And once here—and sentient—there will be no stopping it.

"By 2029, computers will have human level intelligence." In 2024 it's still hit or miss whether my phone will correctly pair with my car. Computers can _simulate_ intelligence or understanding and can shock you, but don't forget that a kid is shocked when a magician pulls a rabbit out of a hat. The average person's understanding of how a computer works is on a par with that kid's. I've worked in computing for four decades now, and I can confidently state you would not want a computer to do your tax returns in 2029 and most likely not in 2099.